Showing posts with label Deep Learning. Show all posts

Monday, April 8, 2019

Friday, April 5, 2019

Edge Computing

As a machine learning engineer in the automotive industry, I am thrilled about edge computing technology.

Edge computing is expected to become bigger than cloud computing.

Edge computing brings memory and computing power closer to the location where it is needed.

As part of my R&D, I have been deploying my tiny TF models in Android apps and on embedded devices.

It is exciting to learn more about edge computing technology:

https://www.sparkfun.com/products/15170

Wednesday, January 16, 2019

Thursday, September 20, 2018

Tuesday, September 18, 2018

Image segmentation with Intersection Over Union (IOU)

If we plot the data it looks like the below

An encoder-decoder architecture is a common convolutional model for image segmentation.

How Do We Evaluate Semantic Segmentation Models?

- Intersection Over Union (IOU)

- IOU is a robust measure of segmentation accuracy
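To make the metric concrete, here is a minimal, dependency-free sketch of IoU on two binary masks. The function name `iou`, the tiny 2x3 masks, and the convention of returning 1.0 when both masks are empty are assumptions made for illustration; they are not taken from the original post.

```python
def iou(pred_mask, true_mask):
    """Intersection over union of two equally sized binary masks (flat sequences of 0/1)."""
    if len(pred_mask) != len(true_mask):
        raise ValueError("masks must have the same number of pixels")
    # Intersection: pixels set in both masks. Union: pixels set in at least one.
    intersection = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    union = sum(1 for p, t in zip(pred_mask, true_mask) if p or t)
    # Assumed convention: two empty masks agree perfectly, which also avoids dividing by zero.
    return intersection / union if union else 1.0


# Hypothetical 2x3 masks, flattened row by row.
truth = [1, 1, 0,
         0, 1, 0]
pred = [1, 0, 0,
        0, 1, 1]
print(iou(pred, truth))  # 2 shared pixels / 4 pixels in the union = 0.5
```

A value of 1.0 means the predicted mask matches the ground truth exactly, and 0.0 means the two masks do not overlap at all.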

Plot predictions vs truth

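    import numpy as np
    import matplotlib.pyplot as plt

    # model, X_test, y_test and im_size come from the training code (not shown here).
    num_samples = 20
    fig = plt.figure(figsize=(10, 2 * num_samples))

    # One row of 4 subplots per sample. i is used both as the index of the test
    # sample and as the offset of the row's first subplot, so every 4th sample is shown.
    for i in range(0, 4 * num_samples, 4):
        segment_pred = model.predict(np.array([X_test[i, ...]]))
        segment_truth = y_test[i, :].reshape(im_size, im_size, 2)[:, :, 1]

        # Column 1: input image
        ax = fig.add_subplot(num_samples, 4, i + 1, xticks=[], yticks=[])
        ax.imshow(X_test[i, :].reshape(im_size, im_size, 3), interpolation='nearest')

        # Column 2: ground-truth mask
        ax = fig.add_subplot(num_samples, 4, i + 2, xticks=[], yticks=[])
        ax.imshow(segment_truth, interpolation='nearest')

        # Column 3: raw network output for the foreground channel
        ax = fig.add_subplot(num_samples, 4, i + 3, xticks=[], yticks=[])
        ax.imshow(segment_pred.reshape(im_size, im_size, 2)[:, :, 1], interpolation='nearest')

        # Column 4: prediction thresholded at 0.5, titled with its IoU
        ax = fig.add_subplot(num_samples, 4, i + 4, xticks=[], yticks=[])
        binary_pred = segment_pred.reshape(im_size, im_size, 2)[:, :, 1] > 0.5
        ax.imshow(binary_pred, interpolation='nearest')

        binary_pred_flat = binary_pred.reshape(im_size * im_size)
        segment_truth_flat = segment_truth.reshape(im_size * im_size)
        # A pixel is in the intersection when both masks are 1 (sum == 2)
        # and in the union when at least one is 1 (sum clipped to 1).
        intersection = np.sum((binary_pred_flat + segment_truth_flat) == 2.0)
        union = np.sum(np.clip(binary_pred_flat + segment_truth_flat, 0.0, 1.0))
        iou = intersection / union
        ax.set_title('IOU = {}'.format(iou))

    plt.show()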

- 1st column: original flower image

- 2nd column: target (ground truth)

- 3rd column: raw network prediction

- 4th column: thresholded prediction with its IOU

Reference link:

http://www.robots.ox.ac.uk/~vgg/data/flowers/17/

Monday, September 17, 2018

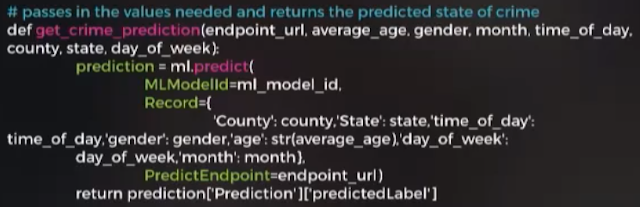

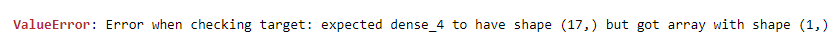

Keras: Error while training the model

While training a Keras model, I ran into an error about the shape of the target array.

Solution:

Keras expects the y data to have shape (N, 17), not (N,) as was probably provided, which is why it raises the error. Converting the class indices to one-hot vectors fixes it:

    import keras

    class_index_one_hot = keras.utils.to_categorical(class_index, 17)

Output example:

    class_index_one_hot: [0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 1.]
    y_train shape: (911, 17)
    y_test shape: (449, 17)
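To see what the conversion does to the shape, here is a small plain-Python sketch of one-hot encoding. It only illustrates the idea behind `to_categorical` and is not the Keras implementation; the helper name `one_hot` and the three sample labels are hypothetical.

```python
def one_hot(class_indices, num_classes):
    """Turn integer class indices (shape (N,)) into one-hot rows (shape (N, num_classes))."""
    rows = []
    for index in class_indices:
        if not 0 <= index < num_classes:
            raise ValueError("class index {} out of range".format(index))
        row = [0.0] * num_classes
        row[index] = 1.0
        rows.append(row)
    return rows


labels = [16, 0, 3]                    # hypothetical class indices for three samples
encoded = one_hot(labels, 17)
print(len(encoded), len(encoded[0]))   # 3 17
print(encoded[0])                      # 1.0 only in the last position
```

Each label becomes a row of 17 values, which is the (N, 17) layout the model's output layer is compared against.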

Friday, September 14, 2018

Image Classification with Convolutional Neural Networks, CIFAR-10 dataset

Dataset can be found here: https://www.cs.toronto.edu/~kriz/cifar.html

The dataset is broken into batches to prevent your machine from running out of memory. The CIFAR-10 training data consists of 5 batches, named data_batch_1, data_batch_2, and so on. Each batch contains images and their labels, where each label is one of the following classes:

- 0 - airplane

- 1 - automobile

- 2 - bird

- 3 - cat

- 4 - deer

- 5 - dog

- 6 - frog

- 7 - horse

- 8 - ship

- 9 - truck

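    import tarfile

    # Extract the downloaded archive into the current working directory.
    # The with block closes the archive, so no explicit tar.close() is needed.
    with tarfile.open("D:\\NAVEED\\cifar-10\\cifar-10-python.tar.gz") as tar:
        tar.extractall()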

Load and pre-process files

- Access the image and the labels from a single batch specified by id (1-5)

- Reshape the images: they are fed to the convolutional layer as a 4-D tensor. Notice that the reshape puts the channels at axis index 1

- Transpose the axes of the reshaped images into the form [batch_size, height, width, channels], so that channels is the last axis

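    import os
    import pickle

    # Folder created by extracting the archive; adjust it if you extracted elsewhere.
    CIFAR10_DATASET_FOLDER = "cifar-10-batches-py"

    def load_cifar10_batch(batch_id):
        """Load one training batch (1-5) and return (images, labels)."""
        # Build the path from batch_id so that each call loads the requested batch.
        path = os.path.join(CIFAR10_DATASET_FOLDER, 'data_batch_' + str(batch_id))
        with open(path, mode='rb') as file:
            batch = pickle.load(file, encoding='latin1')

        # Each row is a flat, channel-first image: reshape to (N, 3, 32, 32),
        # then move the channels to the last axis to get (N, 32, 32, 3).
        features = batch['data'].reshape((len(batch['data']), 3, 32, 32)).transpose(0, 2, 3, 1)
        labels = batch['labels']
        return features, labels

    features, labels = load_cifar10_batch(1)
    features.shape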

(10000, 32, 32, 3)
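The reshape and transpose can be checked by hand on a single image. The sketch below uses plain Python lists instead of NumPy and assumes the layout described on the CIFAR-10 page: 1024 red values, then 1024 green, then 1024 blue, each stored row by row. The helper names and the stand-in image are hypothetical.

```python
HEIGHT, WIDTH, CHANNELS = 32, 32, 3


def flat_index(row, col, channel):
    """Position in the flat 3072-value vector of pixel (row, col) in the given channel."""
    return channel * HEIGHT * WIDTH + row * WIDTH + col


def to_height_width_channels(flat):
    """Rearrange one channel-first flat image into nested [row][col][channel] lists."""
    return [[[flat[flat_index(r, c, ch)] for ch in range(CHANNELS)]
             for c in range(WIDTH)]
            for r in range(HEIGHT)]


# Stand-in image whose values equal their own flat position, so the mapping is easy to read.
flat_image = list(range(HEIGHT * WIDTH * CHANNELS))
image = to_height_width_channels(flat_image)
print(image[0][0])   # [0, 1024, 2048]: red, green and blue values of the top-left pixel
print(image[1][2])   # [34, 1058, 2082]
```

The three values of one pixel sit 1024 positions apart in the flat vector, which is why the channel axis has to come first in the reshape and is only then moved to the end.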

Access the training and test data and the corresponding labels

Each batch in the CIFAR-10 dataset has randomly picked images, so the images come pre-shuffled

    # Use the first 80% of the batch for training.
    train_size = int(len(features) * 0.8)

    training_images = features[:train_size, :, :]
    # The labels must be sliced from labels, not from features.
    training_labels = labels[:train_size]

    print("Training Images:", len(training_images))
    print("Training Labels:", len(training_labels))

Training Images: 8000 Training Labels: 8000

    test_images = features[train_size:, :, :]
    test_labels = labels[train_size:]

    print("Test images: ", len(test_images))
    print("Test labels: ", len(test_labels))

Test images: 2000 Test labels: 2000

    height = 32
    width = 32
    channels = 3
    n_inputs = height * width

Placeholders for training data and labels

- The training dataset placeholder can have any number of instances and each instance is an array of 32x32 pixels (we've already reshaped the data earlier)

- The images are fed to the convolutional layer as a 4D tensor [batch_size, height, width, channels]

Add a dropout layer to avoid overfitting the training data

- The training flag is set to False during prediction and is True while training (dropout is applied only in the training phase)

- The dropout_rate indicates the chances that a neuron is turned off during training
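The effect of the training flag and the dropout rate can be sketched without TensorFlow. The function below is an illustrative "inverted dropout" on a plain list, assuming the common scheme in which surviving values are scaled by 1 / (1 - rate); the function name, the rate of 0.3 and the seeded random generator are assumptions for the example, not values from the original post.

```python
import random


def dropout(activations, rate, training, rng=random):
    """Inverted dropout on a list of activations.

    In training mode each value is zeroed with probability `rate` and the
    survivors are scaled by 1 / (1 - rate); otherwise the input is returned unchanged.
    """
    if not training or rate == 0.0:
        return list(activations)
    keep_prob = 1.0 - rate
    return [a / keep_prob if rng.random() >= rate else 0.0 for a in activations]


activations = [1.0] * 10
# Prediction: the flag is False, so nothing is dropped.
print(dropout(activations, rate=0.3, training=False))
# Training: every value is either 0.0 (dropped) or 1 / 0.7 (kept and rescaled).
print(dropout(activations, rate=0.3, training=True, rng=random.Random(0)))
```

Scaling the kept values keeps the expected sum of the activations the same in both modes, so no correction is needed at prediction time.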

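    import tensorflow as tf  # TensorFlow 1.x API

    # X and training are fed in the training loop, but their definitions are missing
    # from this post. The two lines below are reconstructed from the bullets above:
    # a 4-D image tensor, and a flag that defaults to False (prediction mode).
    X = tf.placeholder(tf.float32, shape=[None, height, width, channels], name="X")
    training = tf.placeholder_with_default(False, shape=(), name="training")

    y = tf.placeholder(tf.int32, shape=[None], name="y")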

Neural network design

- 2 convolutional layers

- 1 max pooling layer

- 1 convolutional layer

- 1 max pooling layer

- 2 fully connected layers

- Output logits layer

- Specify the number of feature maps in each layer. A feature map highlights the areas of an image that are most similar to the filter applied

- The kernel size gives the dimensions of the filter that is applied to the image. The filter variables are created for you and initialized randomly

- The stride is the step by which the filter moves over the input, i.e. the distance between two adjacent receptive fields on the input

- "SAME" padding means that the convolutional layer zero-pads the input as needed so that every input position is covered; the output size is then the input size divided by the stride, rounded up

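    # The opening of the conv1 call is missing from this post. filters=32 follows from
    # the conv1.shape output below; the input tensor is assumed to be X (or the
    # output of the dropout layer, if one is applied to the input).
    conv1 = tf.layers.conv2d(X, filters=32,
                             kernel_size=3, strides=1, padding="SAME",
                             activation=tf.nn.relu, name="conv1")

    conv2 = tf.layers.conv2d(conv1, filters=64,
                             kernel_size=3, strides=2, padding="SAME",
                             activation=tf.nn.relu, name="conv2")

    conv1.shape

TensorShape([Dimension(None), Dimension(32), Dimension(32), Dimension(32)])

    conv2.shape

TensorShape([Dimension(None), Dimension(16), Dimension(16), Dimension(64)])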

Connect a max pooling layer

- The filter is a 2x2 filter

- The stride is 2 both horizontally and vertically

- This results in an image that is 1/4th the size of the original image

    pool3 = tf.nn.max_pool(conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="VALID")
    pool3.shape

TensorShape([Dimension(None), Dimension(8), Dimension(8), Dimension(64)])

    conv4 = tf.layers.conv2d(pool3, filters=128,
                             kernel_size=4, strides=3, padding="SAME",
                             activation=tf.nn.relu, name="conv4")
    conv4.shape

TensorShape([Dimension(None), Dimension(3), Dimension(3), Dimension(128)])

Reshape the pooled layer to be a 1-D vector (flatten it)

    pool5 = tf.nn.max_pool(conv4, ksize=[1, 2, 2, 1], strides=[1, 1, 1, 1], padding="VALID")
    pool5.shape

TensorShape([Dimension(None), Dimension(2), Dimension(2), Dimension(128)])

    pool5_flat = tf.reshape(pool5, shape=[-1, 128 * 2 * 2])

    fullyconn1 = tf.layers.dense(pool5_flat, 128, activation=tf.nn.relu, name="fc1")
    fullyconn2 = tf.layers.dense(fullyconn1, 64, activation=tf.nn.relu, name="fc2")
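The spatial sizes reported above can be reproduced with a few lines of arithmetic. This is a sketch of the usual output-size rules for "SAME" and "VALID" padding; the helper `output_size` and the `layers` table are written for this example and only restate the kernel sizes, strides and padding modes used in the network.

```python
import math


def output_size(input_size, kernel_size, stride, padding):
    """Spatial output size of a convolution or pooling layer for the given padding mode."""
    if padding == "SAME":
        # Zero padding is added so that every input position is covered.
        return math.ceil(input_size / stride)
    if padding == "VALID":
        # No padding: the window must fit entirely inside the input.
        return math.ceil((input_size - kernel_size + 1) / stride)
    raise ValueError("padding must be 'SAME' or 'VALID'")


# (name, kernel size, stride, padding) for each layer, in order.
layers = [
    ("conv1", 3, 1, "SAME"),
    ("conv2", 3, 2, "SAME"),
    ("pool3", 2, 2, "VALID"),
    ("conv4", 4, 3, "SAME"),
    ("pool5", 2, 1, "VALID"),
]

size = 32  # CIFAR-10 images are 32x32
sizes = {}
for name, kernel_size, stride, padding in layers:
    size = output_size(size, kernel_size, stride, padding)
    sizes[name] = size

print(sizes)                      # {'conv1': 32, 'conv2': 16, 'pool3': 8, 'conv4': 3, 'pool5': 2}
print(128 * sizes["pool5"] ** 2)  # 512 values per image after flattening
```

The final 2x2 map with 128 channels is where the `128 * 2 * 2` in the flattening step comes from.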

Reference links: https://github.com/tflearn/tflearn/issues/57

The final output layer with softmax activation

Do not apply the softmax activation to this layer. tf.nn.sparse_softmax_cross_entropy_with_logits applies the softmax activation itself and also calculates the cross-entropy, which is our cost function.

    logits = tf.layers.dense(fullyconn2, 10, name="output")

    xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits, labels=y)
    loss = tf.reduce_mean(xentropy)

    optimizer = tf.train.AdamOptimizer()
    training_op = optimizer.minimize(loss)

Check correctness and accuracy of the prediction

    correct = tf.nn.in_top_k(logits, y, 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32))

- Check whether the highest probability output in logits is equal to the y-label

- Check the accuracy across all predictions (How many predictions did we get right?)
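For readers who want to see what the loss and the accuracy check compute, here is a plain-Python sketch of both on a tiny batch. It mirrors the math (softmax followed by the negative log-probability of the true class, and a top-1 comparison), not TensorFlow's implementation; the function names and the sample logits are hypothetical.

```python
import math


def sparse_softmax_cross_entropy(logits, label):
    """Cross-entropy of one sample: -log(softmax(logits)[label]), computed stably."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(v - peak) for v in logits))
    return log_sum_exp - logits[label]


def top1_accuracy(batch_logits, labels):
    """Fraction of samples whose largest logit sits at the true label's index."""
    hits = sum(1 for logits, label in zip(batch_logits, labels)
               if max(range(len(logits)), key=logits.__getitem__) == label)
    return hits / len(labels)


# Hypothetical logits for three samples and three classes.
batch_logits = [[2.0, 0.5, 0.1], [0.2, 0.1, 3.0], [1.0, 1.5, 0.3]]
labels = [0, 2, 0]

losses = [sparse_softmax_cross_entropy(l, t) for l, t in zip(batch_logits, labels)]
print(sum(losses) / len(losses))            # mean loss over the batch
print(top1_accuracy(batch_logits, labels))  # 2 of the 3 predictions are correct
```

Because softmax preserves the ordering of the logits, the accuracy can be computed on the raw logits, which is why the output layer does not need its own softmax.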

    init = tf.global_variables_initializer()
    saver = tf.train.Saver()

Set up a helper method to access training data in batches

    def get_next_batch(features, labels, train_size, batch_index, batch_size):
        # Split the batch file into its training and test parts.
        training_images = features[:train_size, :, :]
        training_labels = labels[:train_size]
        test_images = features[train_size:, :, :]
        test_labels = labels[train_size:]

        # Take the mini-batch from the training part only.
        start_index = batch_index * batch_size
        end_index = start_index + batch_size
        return (training_images[start_index:end_index, :, :],
                training_labels[start_index:end_index],
                test_images, test_labels)

Train and evaluate the model

    n_epochs = 10
    batch_size = 128

    with tf.Session() as sess:
        init.run()
        for epoch in range(n_epochs):
            # Add this in when we want to run the training on all batches in CIFAR-10
            for batch_id in range(1, 6):
                batch_index = 0
                features, labels = load_cifar10_batch(batch_id)
                train_size = int(len(features) * 0.8)

                for iteration in range(train_size // batch_size):
                    X_batch, y_batch, test_images, test_labels = get_next_batch(
                        features, labels, train_size, batch_index, batch_size)
                    batch_index += 1
                    sess.run(training_op, feed_dict={X: X_batch, y: y_batch, training: True})

            # Report accuracy on the last mini-batch and on the test part of the last batch file.
            acc_train = accuracy.eval(feed_dict={X: X_batch, y: y_batch})
            acc_test = accuracy.eval(feed_dict={X: test_images, y: test_labels})
            print(epoch, "Train accuracy:", acc_train, "Test accuracy:", acc_test)

        save_path = saver.save(sess, "./my_mnist_model")

- With less training data the model performs poorly; it improves as you increase the amount of training data (use all batches)

- Ensure that dropout is enabled during training to avoid overfitting

9 Train accuracy: 0.73125 Test accuracy: 0.7135
